Adding a CI/CD process to my workflow is one of the quick wins I reach for on every serious project I work on.

Whilst most of my personal work is hosted on GitLab, the code for a recent project I was working on lived in Bitbucket. This was my first time using Bitbucket, so I wanted to document how I built assets, linted code and ran tests using Bitbucket Pipelines.

What will these pipelines do?

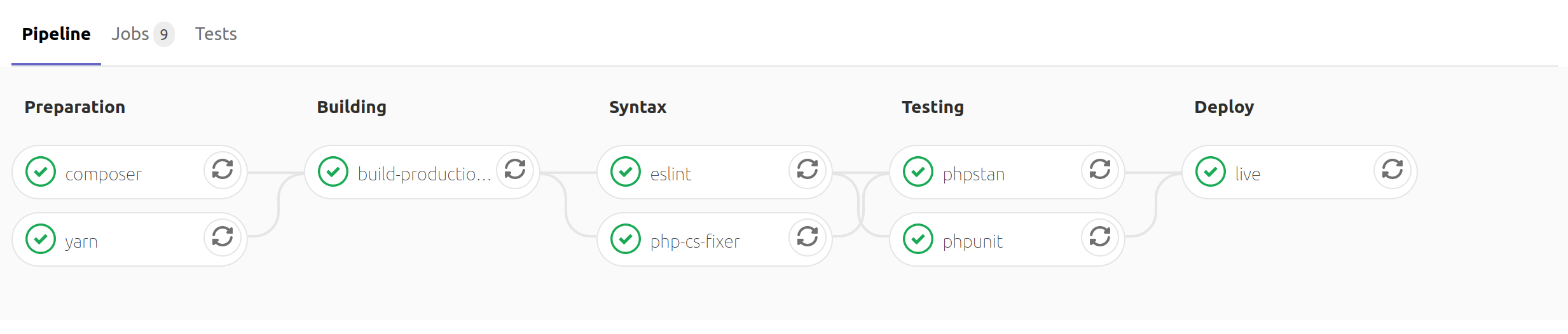

By the end of this article you should have a working `bitbucket-pipelines.yml` file for your Laravel project that does the following:

- Use `composer` to install your project's dependencies
- Run `php-cs-fixer` to enforce a code style
- Run `larastan` to perform static analysis against the code base
- Run `php-cs-fixer` and `larastan` in parallel
- Run `phpunit` to execute your project's test suite
- For a production build, run `yarn run production`
- Allow you to manually trigger a deployment to production using Laravel Deployer
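As a rough outline of where we're heading, here is a minimal sketch of a `bitbucket-pipelines.yml` covering the steps listed above. It is illustrative rather than a verified configuration: it assumes the `composer:2` Docker image for the PHP steps, `node:16` for the asset build, PHP-CS-Fixer and Larastan installed as Composer dev dependencies (with Larastan configured through a `phpstan.neon` in the project root), and Laravel Deployer's `php artisan deploy` command with SSH access to your server already set up in Bitbucket. Image tags, step names and flags are all assumptions you should adapt.

```yaml
# Sketch only: image tags and commands are assumptions, adjust for your project.
image: composer:2 # assumption: official Composer image, which bundles PHP

pipelines:
  default:
    # Install PHP dependencies once and share vendor/ with later steps.
    - step:
        name: Install Composer dependencies
        script:
          - composer install --no-interaction --prefer-dist --no-progress
        artifacts:
          - vendor/**

    # Code style and static analysis don't depend on each other, so run them side by side.
    - parallel:
        - step:
            name: PHP CS Fixer
            script:
              # --dry-run reports violations without rewriting files, failing the step if any are found
              - vendor/bin/php-cs-fixer fix --dry-run --diff
        - step:
            name: Larastan
            script:
              # Larastan runs through PHPStan; assumes a phpstan.neon in the project root
              - vendor/bin/phpstan analyse --memory-limit=2G

    - step:
        name: PHPUnit
        script:
          # Laravel needs an .env file and an application key before the tests can boot
          - cp .env.example .env
          - php artisan key:generate
          - vendor/bin/phpunit

    - step:
        name: Build production assets
        image: node:16 # assumption: any Node image that ships with Yarn
        script:
          - yarn install --frozen-lockfile
          - yarn run production

    # Only runs when someone clicks "Deploy" in the Bitbucket UI.
    - step:
        name: Deploy to production
        deployment: production
        trigger: manual
        script:
          # assumption: Laravel Deployer is configured and Bitbucket's SSH key can reach the server
          - php artisan deploy
```

Declaring `vendor/**` as an artifact lets the later steps reuse the dependencies installed in the first step instead of running Composer again, and keeping the deploy step on `trigger: manual` means every push is linted and tested while nothing reaches production until someone explicitly triggers it.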